Object Storage (Weather)

No kdb+ knowledge required

This example assumes no prior experience with q/kdb+. You can replace the URL provided with any other object storage URL to get similar results.

Object storage is a means of storage for unstructured data, eliminating the scaling limitations of traditional file storage. Limitless scale is the reason object storage is the storage of the cloud; Amazon, Google and Microsoft all employ object storage as their primary storage.

The walkthrough offers a sample weather dataset hosted on each of the major cloud providers. Select one of the cloud providers and enter the relevant details for that provider into the first input form.

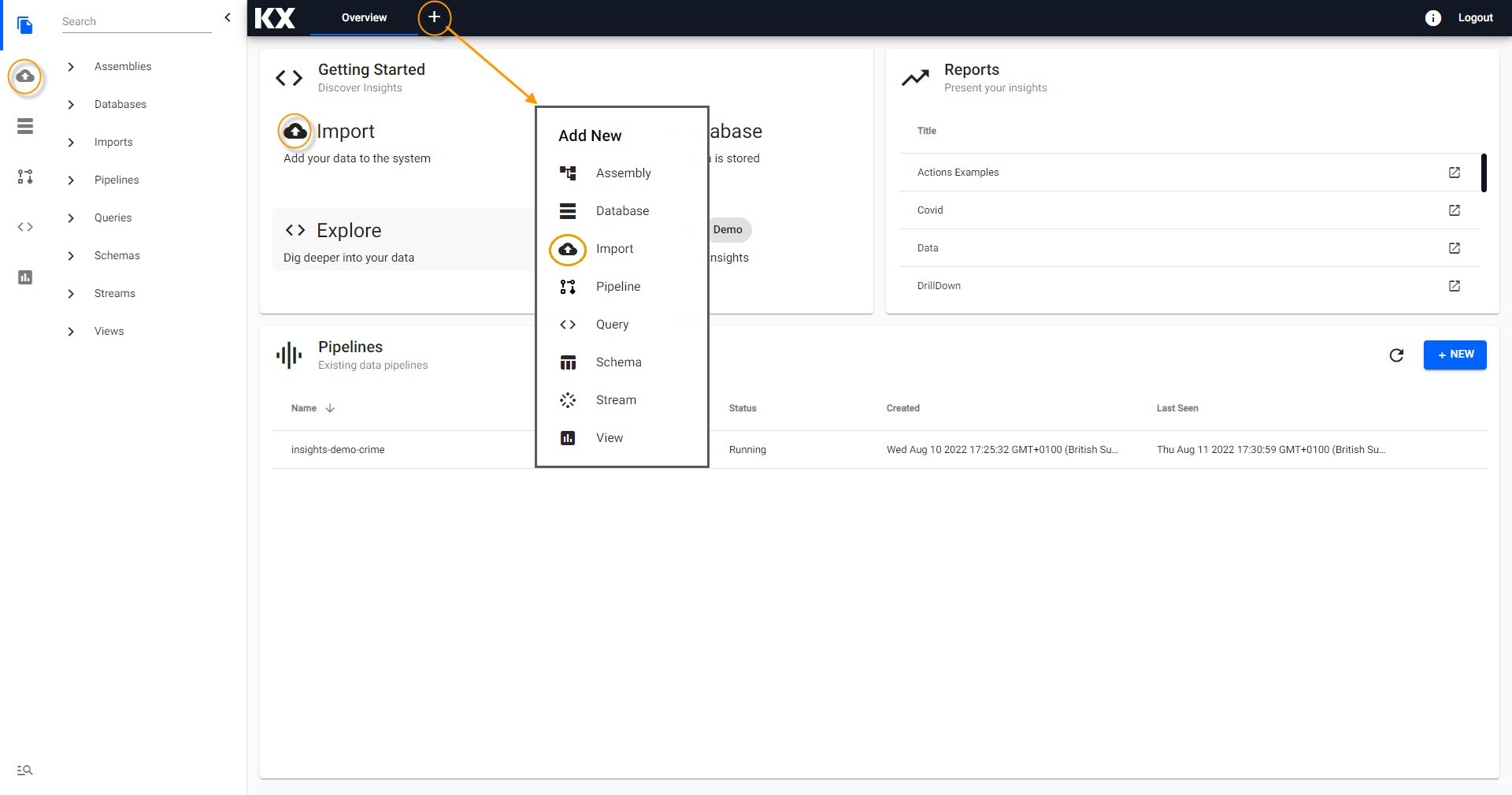

Creating the Pipeline

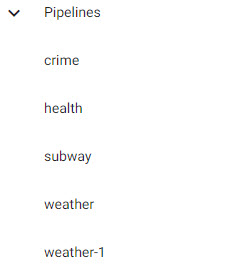

First select the import wizard; the import wizard can be accessed from the [+] menu of the Document bar or by clicking ![]() from the left-hand icon menu or Overview page. All created pipelines, irrespective of source, are listed under Pipelines in the left-hand entity tree menu.

Access Import from the Overview page.

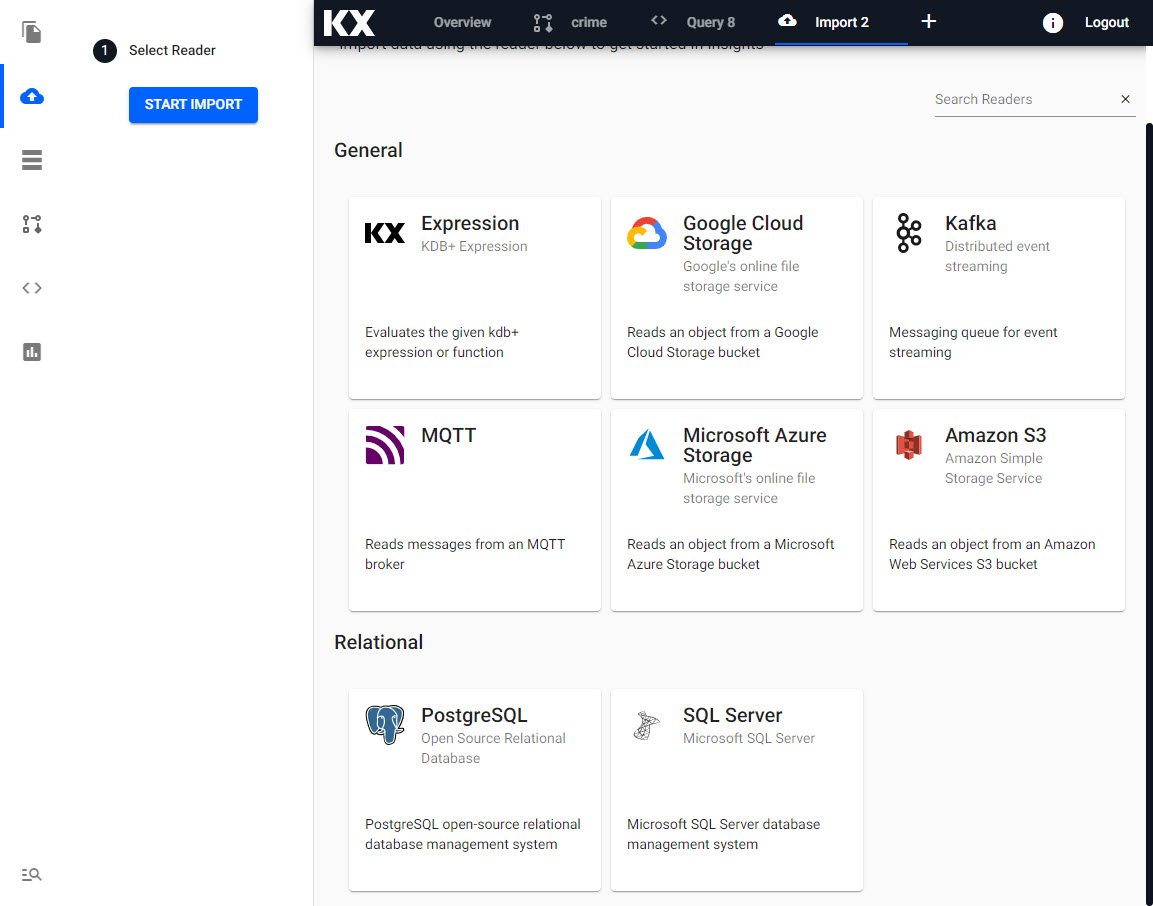

Select a Reader

Select one of Google Cloud Storage, Microsoft Azure Storage or Amazon S3 from the available Import options.

Configure a Reader

Enter the relevant details for that provider into the first input form.

Google Cloud Storage:

| setting | value |
|---|---|
| Path* | gs://kxevg/weather/temp.csv |
| Project ID | kx-evangelism |
| File Mode* | Binary |
| Offset* | 0 |
| Chunking* | Auto |
| Chunk Size* | 1MB |
| Use Authentication | No |

Microsoft Azure Storage:

| setting | value |
|---|---|
| Path* | ms://kxevg/temp.csv |
| Account* | kxevg |
| File Mode* | Binary |
| Offset* | 0 |
| Chunking* | Auto |
| Chunk Size* | 1MB |
| Use Authentication | No |

Amazon S3:

| setting | value |
|---|---|
| Path* | s3://kxevangelism/temp.csv |
| Region* | eu-west-1 |
| File Mode* | Binary |
| Offset* | 0 |
| Chunking* | Auto |
| Chunk Size | 1MB |
| Use Authentication | No |

Click Next when done.
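Each Path value in the reader settings is a URL whose scheme identifies the provider. As a small illustration only (it is not part of the import wizard), the following Python sketch splits the three sample paths using the standard library. `split_object_path` is a hypothetical helper name, and calling the middle component a "bucket or container" is an assumption about how each provider labels it.

```python
from urllib.parse import urlparse

# Sample paths taken from the reader settings in this walkthrough
paths = [
    "gs://kxevg/weather/temp.csv",
    "ms://kxevg/temp.csv",
    "s3://kxevangelism/temp.csv",
]

def split_object_path(path):
    """Return (scheme, bucket_or_container, object_key) for an object storage URL.

    Hypothetical helper for illustration; the names of the parts are assumptions.
    """
    parts = urlparse(path)
    return parts.scheme, parts.netloc, parts.path.lstrip("/")

for p in paths:
    print(split_object_path(p))
```

Swapping in a different object storage URL changes only these three components, which is why another dataset can be substituted for the sample one.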

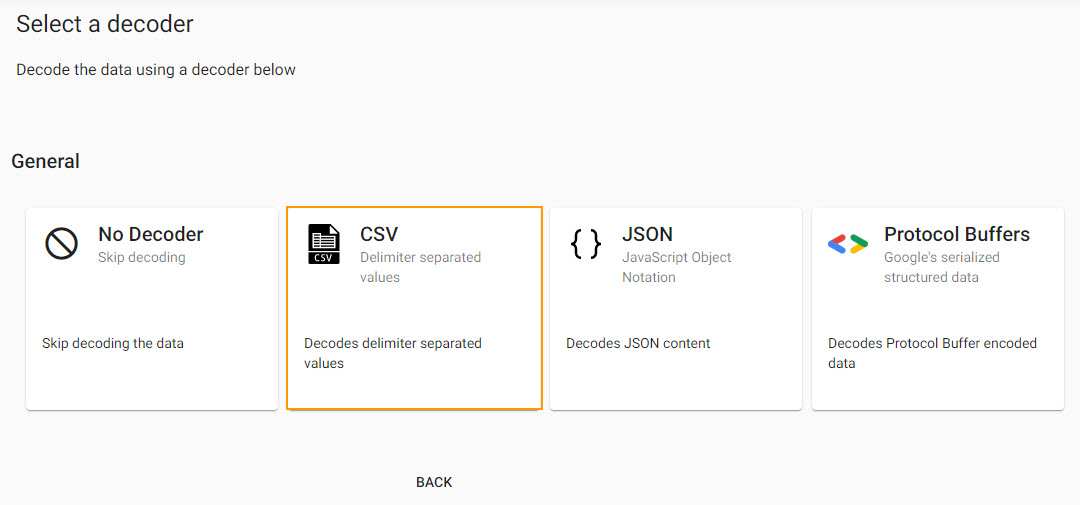

Select a Decoder

With the data source defined, the next step defines a decoder, which describes the type of data being imported. The source data is a CSV file, so set the delimiter (typically a comma for a CSV file) and indicate whether the source data has a header row.

Select a CSV decoder.

| setting | value |
|---|---|
| Delimiter | , |
| Header | First Row |

Click Next when done.
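To make the Delimiter and Header settings concrete, here is a minimal Python sketch of what decoding comma-delimited text with a header row looks like. It uses the standard `csv` module, not the product's decoder. The sample rows are invented for illustration: only the `airtemp` column name comes from this walkthrough, and `timestamp` is a hypothetical column.

```python
import csv
import io

# Invented sample data; the first row is the header, fields are comma-delimited
raw = (
    "timestamp,airtemp\n"
    "2024-01-01T00:00:00,41.0\n"
    "2024-01-01T01:00:00,39.2\n"
)

# DictReader treats the first row as column names, like Header = First Row
reader = csv.DictReader(io.StringIO(raw), delimiter=",")
rows = list(reader)

print(reader.fieldnames)
print(rows[0])
```

Every decoded value is still a string at this point, which is why a schema is applied in the following step.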

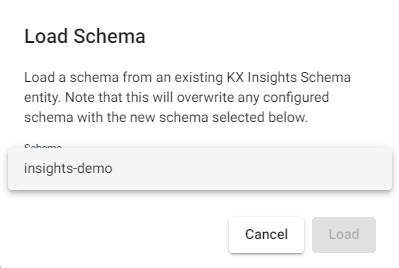

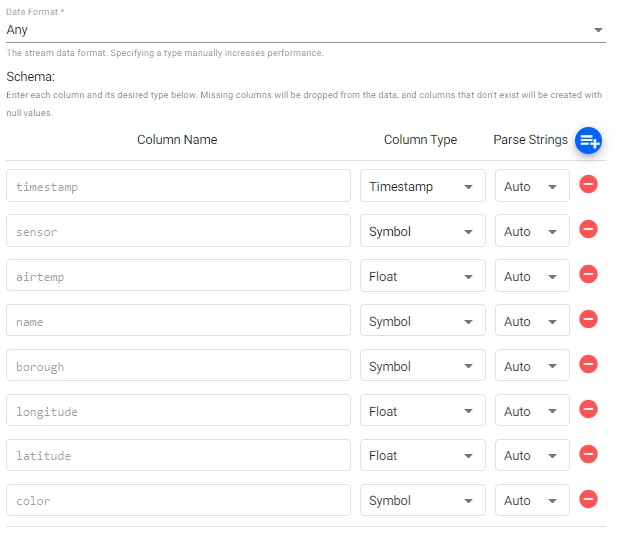

Configure Schema

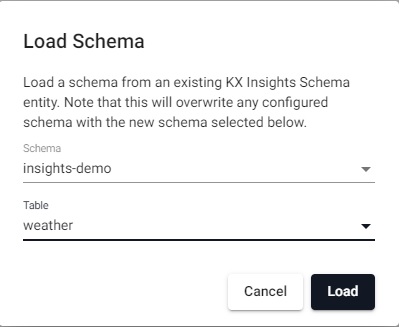

Imported data requires a schema compatible with kdb Insights Enterprise. The insights-demo database has a schema table for each of the available data sets. The Configure Schema step applies to the imported data the kdb+ datatypes that are used by the destination database and required for querying the data in kdb Insights Enterprise.

The next step applies a schema to the imported data.

| setting | value |
|---|---|
| Apply a Schema | Checked |
| Data Format | Table |

To attach the schema to the data set:

- Leave the Data Format as the default value of Any.
- Click the schema selection icon.
- Select the insights-demo Schema from the dropdown.
- Choose the Table weather.
- Leave Parse Strings set to Auto for all fields.

Click Next when done.
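Conceptually, applying a schema means converting each decoded string field to the datatype of the destination column. The sketch below shows the idea in plain Python under stated assumptions: the `schema` mapping, the `apply_schema` helper and the `timestamp` column are hypothetical, and the actual kdb+ datatypes are chosen by the weather table schema, not by this code.

```python
from datetime import datetime

# Hypothetical schema: column name -> function that parses the string value
schema = {
    "timestamp": datetime.fromisoformat,
    "airtemp": float,
}

def apply_schema(row, schema):
    """Return a new row with each field parsed according to the schema."""
    return {name: parse(row[name]) for name, parse in schema.items()}

# A decoded CSV row: every value is still a string
decoded = {"timestamp": "2024-01-01T00:00:00", "airtemp": "41.0"}

typed = apply_schema(decoded, schema)
print(typed)
```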

Configure Writer

We will use the insights-demo database and assign the imported data to the weather table.

| setting | value |
|---|---|
| Database | insights-demo |
| Table | weather |
| Deduplicate Stream | Yes |

When done, click the button that completes the import. This opens the pipeline in the pipeline viewer.

Writer - KX Insights Database

The Writer - KX Insights Database node is essential for querying data in a pipeline. This node defines which assembly and table to write the data to. As part of this, the assembly must also be deployed. An assembly or pipeline can be deployed individually, or a pipeline can be associated with an assembly, in which case all pipelines associated with that assembly are deployed when the assembly is deployed.

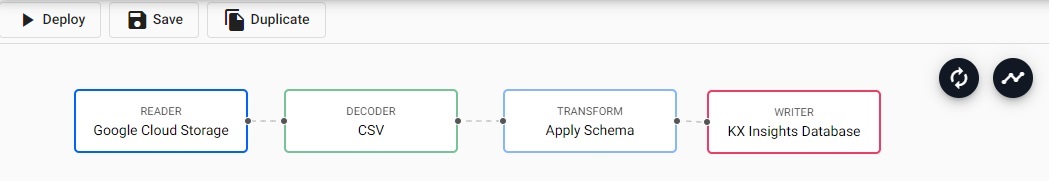

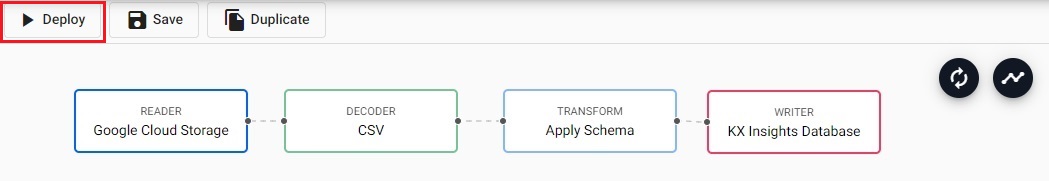

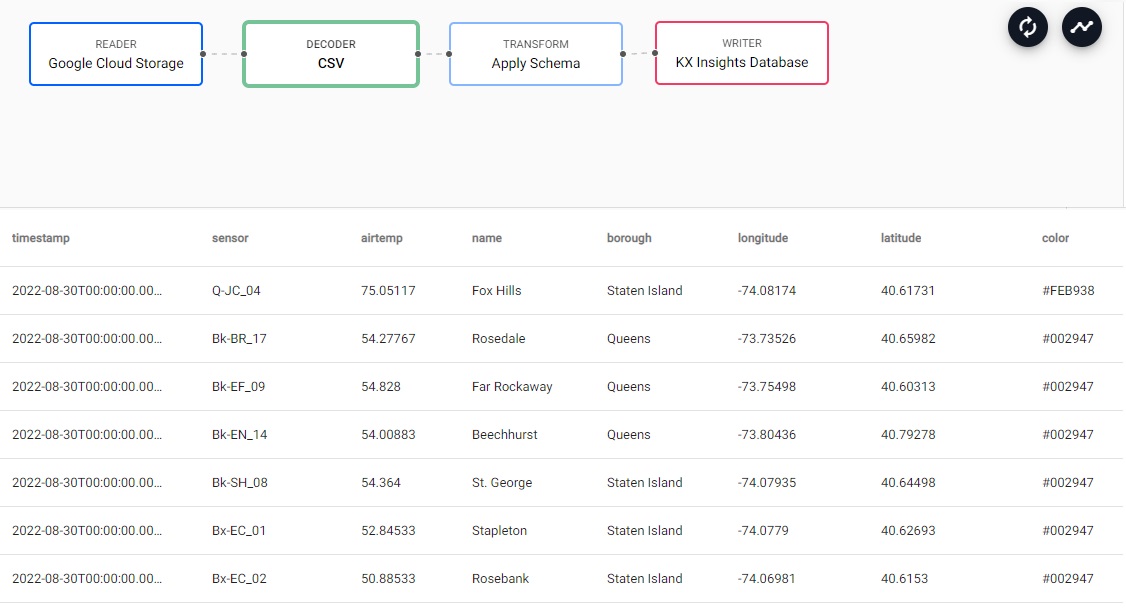

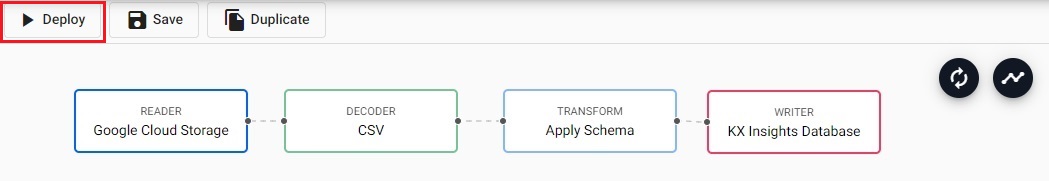

Pipeline view

The pipeline view allows you to review and edit your pipeline by selecting any of the nodes.

A completed weather pipeline using the Google Cloud Storage reader.

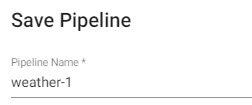

Click Save and give the pipeline a name. This name should be unique to the pipeline; in the Free Trial there is already a pipeline named weather, so give this new pipeline a name like weather-1.

Saving the pipeline name as weather-1.

The newly created pipeline will now feature in the list of pipelines in the left-hand entity tree menu.

The newly created pipeline added to the list of available pipelines in the left-hand entity tree menu.

Testing the pipeline

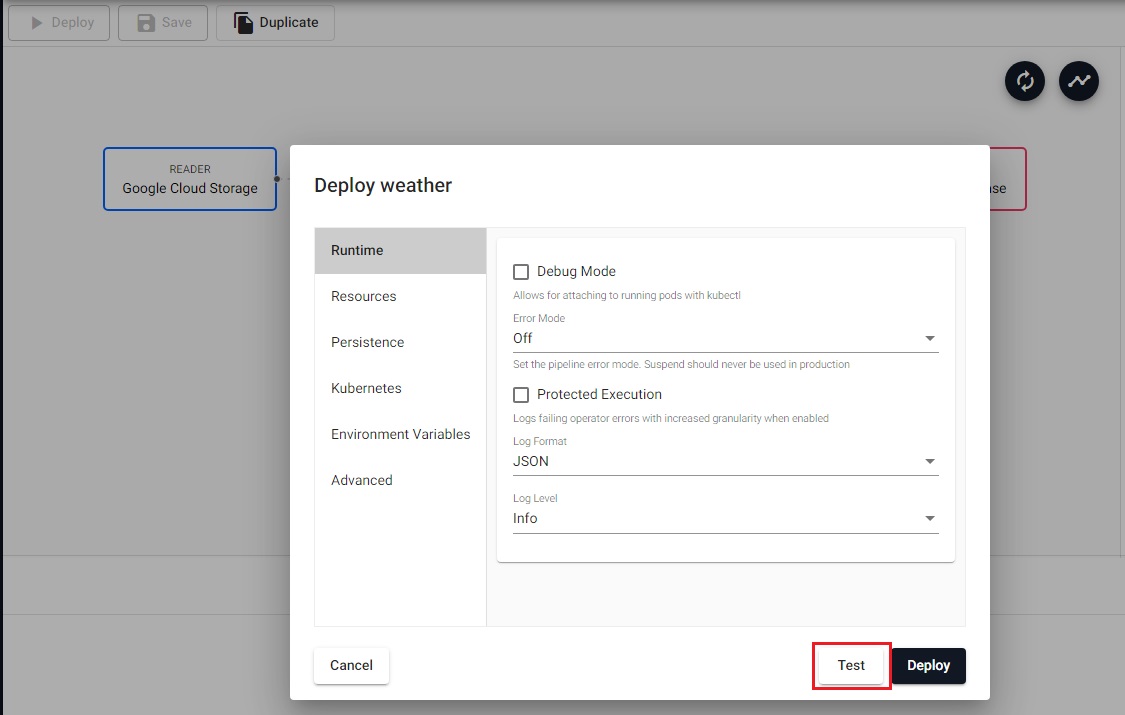

The next step is to test deploy the pipeline that has been created in the above Importing stage.

To start a test deploy of the pipeline, first click Deploy.

- Select Deploy.
- Select Test on the Deploy screen.

  This deploys your pipeline in test mode, collects some of the data from the reader and runs it through the steps prior to the writedown to the database.

  Any errors that occur in the writedown phase will not be picked up here and will only occur when the pipeline is deployed.

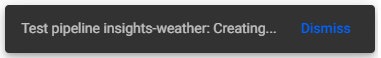

It is recommended to stay on this tab until the test completes. Assuming you have defined the pipeline correctly, the following status popups will appear:

- Test pipeline: Creating...
- Test pipeline: Initializing controller...
- Test deploy running. Waiting for trace data...
- Test deploy succeeded without errors.

Once the Test deploy succeeded without errors popup has been displayed, you can check the results; any erroring nodes will be highlighted. Clicking on a node displays a table in the bottom panel, populated with the output from the test.

A successful test will populate the lower panel with the results from the node at that step of the pipeline.

The panel is not populated for Writer nodes.
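The idea behind a test deploy, running a sample through every step before the writer and recording each node's output, can be sketched in a few lines of Python. This is a conceptual illustration only and not how kdb Insights Enterprise implements it; `decode`, `apply_schema`, `trial_run` and the sample data are all hypothetical.

```python
def decode(lines):
    """Split comma-delimited lines into dict rows, using the first line as the header."""
    header, *body = lines
    names = header.split(",")
    return [dict(zip(names, line.split(","))) for line in body]

def apply_schema(rows):
    """Convert the airtemp field of each row from string to float."""
    return [{"airtemp": float(row["airtemp"])} for row in rows]

# Stages up to, but not including, the writer
stages = [("decoder", decode), ("schema", apply_schema)]

def trial_run(sample, stages):
    """Run the sample through each stage, keeping every stage's output for inspection."""
    trace = {}
    data = sample
    for name, stage in stages:
        data = stage(data)
        trace[name] = data
    return trace

sample = ["airtemp", "41.0", "39.2"]  # small sample taken from the reader
trace = trial_run(sample, stages)
print(trace["decoder"])
print(trace["schema"])
```

Because the writer stage is never run in this sketch, a failure there would go unnoticed, which mirrors the caveat that writedown errors only surface on a full deploy.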

I want to learn more about Pipelines

Deploying the pipeline

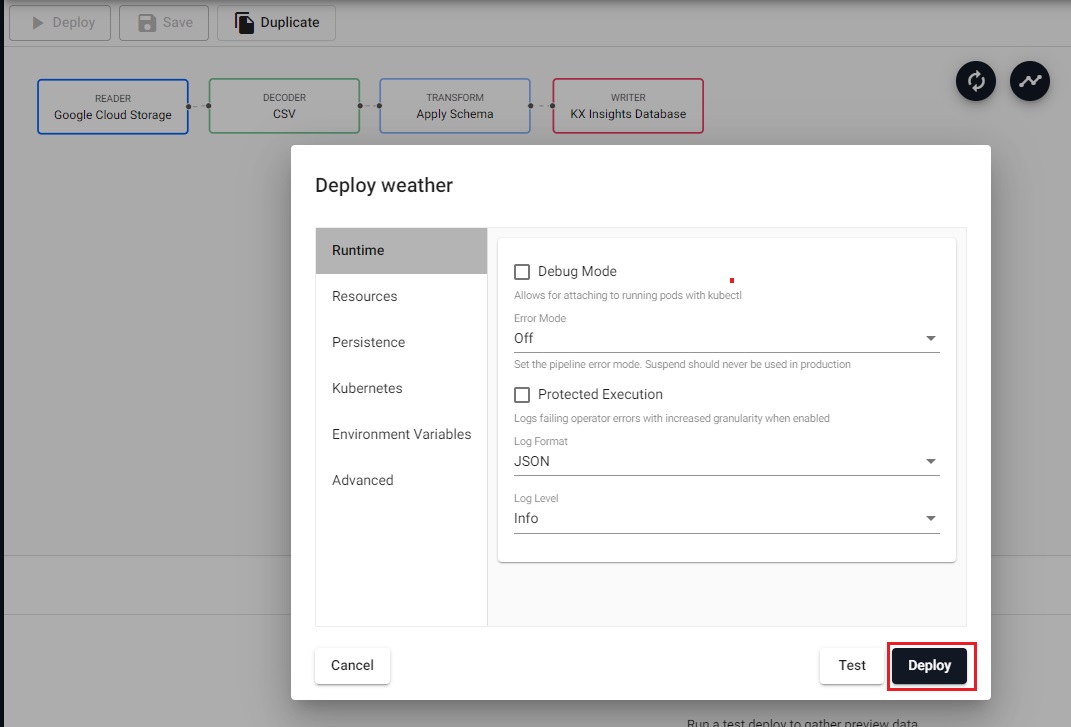

The next step is to deploy your pipeline:

Deploy the weather pipeline.

-

If you did not follow the

Teststep, or made changes since you last saved, you need to Save your pipeline. -

Select Deploy.

-

On the Deploy screen that appears select Deploy.

Deployment dialog; clickDeploy- no other changes are required here. -

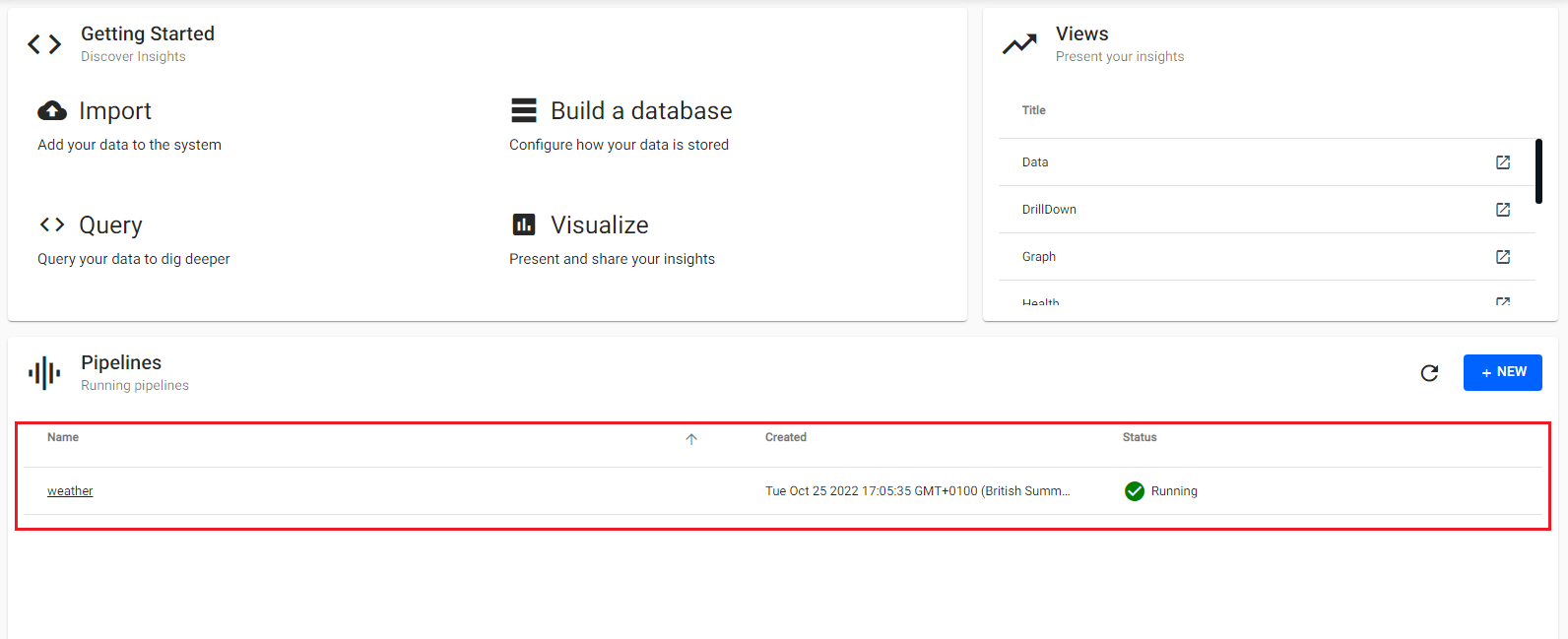

Once deployed you can check on the progress of your pipeline back in the Overview pane where you started.

-

When it reaches

Status=Runningthen it is done and your data is loaded.

A successfully deployed weather pipeline will be shown as running with a green circle tick next to it.

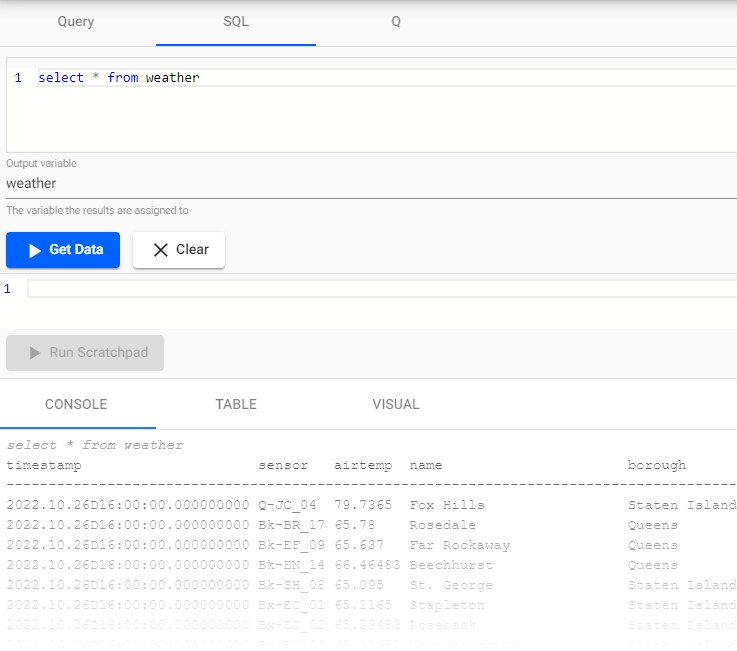

Querying

Select Query from the Overview panel to start the process of querying your loaded data. See here for an overview of the Query Window.

The Query tab offers a few different options: Query, SQL and q. Let's look at SQL.

You can run the following SQL to retrieve all records from the weather table.

```sql
SELECT * FROM weather
```

Note that you will need to define an Output Variable and then select Get Data to execute the query.

A successful SQL query for the weather table from insights-demo.

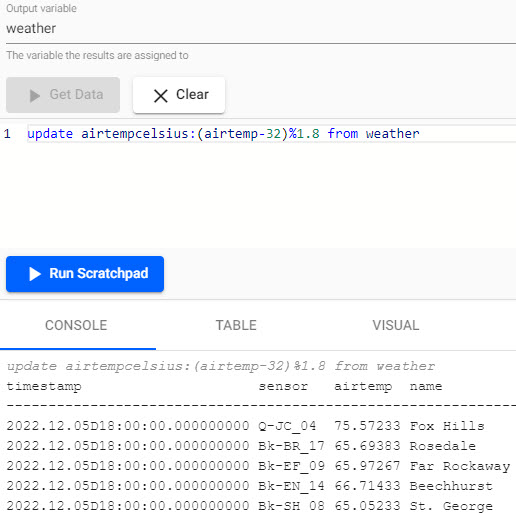

Now that we have output the weather table, we can see that the airtemp column is in Fahrenheit. To add a new column that shows the temperature in Celsius, we can run the following code:

Output variable is named weather

```q
update airtempcelsius:(airtemp-32)%1.8 from weather
```

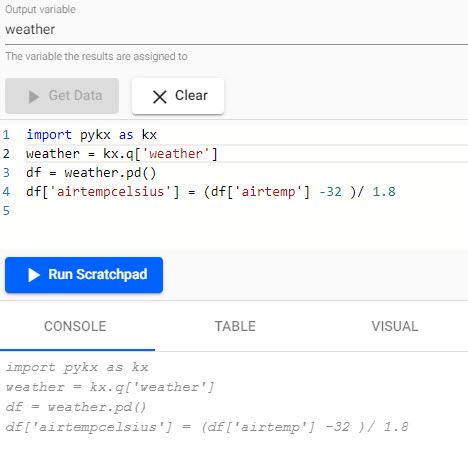

Add the airtempcelsius column:

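```python
import pykx as kx

# Fetch the weather output variable and convert it to a pandas DataFrame
weather = kx.q['weather']
df = weather.pd()

# Convert Fahrenheit to Celsius in a new column
df['airtempcelsius'] = (df['airtemp'] - 32) / 1.8
```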

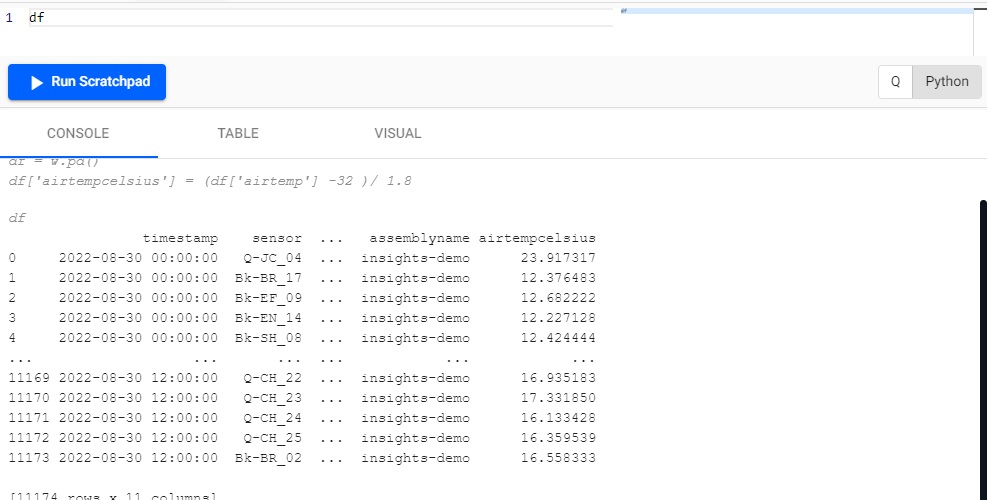

Display the results:

```python
df
```

Tip

Run Scratchpad executes the whole scratchpad. The current line or selection can be run with the keyboard shortcut of CTRL + Enter, or ⌘Enter on Mac.

We can see that a new column is created called airtempcelsius!
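The new column uses the standard conversion C = (F - 32) / 1.8. As a quick sanity check that does not depend on kdb+ or PyKX, the plain Python sketch below applies the same formula to well-known reference points; `fahrenheit_to_celsius` is a hypothetical helper name.

```python
import math

def fahrenheit_to_celsius(temp_f):
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32) / 1.8

# Reference points: freezing and boiling points of water, and -40 where the scales meet
freezing = fahrenheit_to_celsius(32.0)
boiling = fahrenheit_to_celsius(212.0)
crossover = fahrenheit_to_celsius(-40.0)

# isclose allows for floating-point rounding in the division
print(math.isclose(freezing, 0.0, abs_tol=1e-9))
print(math.isclose(boiling, 100.0))
print(math.isclose(crossover, -40.0))
```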

Refresh Browser

If the tables do not appear in the Query tab as expected, refresh your browser and retry.

Troubleshooting

If the tables are still not outputting after refreshing your browser, try our Troubleshooting page.

Let's ingest some more data! Try next with the live Kafka Subway Data.